Autonomous Vehicle eHMI Study

Dissertation · Research Study · UX Research · eHMI · Autonomous Vehicles

This dissertation explored how older pedestrians interpret external Human Machine Interfaces (eHMIs) in autonomous vehicle yielding scenarios, comparing visual, audio, and haptic cues to understand which combinations best reduced uncertainty and improved confidence, clarity, and perceived safety.

TL;DR

- Solo dissertation at Birmingham City University

- Designed and ran a study comparing visual, audio, and haptic eHMI combinations to understand which best supported older pedestrians crossing in front of autonomous vehicles

- Awarded 1st Place at the PGXPO and Research Excellence Award

Challenge

Designing eHMI Communication for Older Pedestrians

Autonomous vehicles must communicate their intent to yield clearly without the social cues pedestrians usually rely on, such as eye contact, hand gestures, or engine noise. This creates genuine uncertainty at crossings, particularly for older adults who may already feel less confident navigating modern traffic environments.

This dissertation examined how older adults interpret and respond to multimodal eHMI signals, and which combinations of visual, audio, and haptic cues best support safe, confident crossing decisions in AV yielding scenarios.

Overview

Project at a Glance

- Role: UX Researcher (MSc dissertation, end-to-end)

- Audience: Older pedestrians

- Goal: Identify which eHMI modality (or combination) best supports clarity, confidence, and correct yielding interpretation

- Approach: Controlled prototype stimuli and participant feedback to turn results into practical design guidance

- Outputs: Ranked modality preferences, insights from interviews, and actionable recommendations for AV yielding communication

Research Key Questions

- Which eHMI modality (or combination) best supports interpretation of yielding intent?

- Do multimodal combinations increase confidence over single cues?

- What do older pedestrians find confusing or reassuring?

Study Design

- Participants: n = 10 older adults

- Design: Within-subjects, comparing 8 conditions

- Measures: Participant ratings (including preference ranking) + short interviews to capture reasoning

- Analysis: Quantitative comparison across conditions, paired with qualitative themes to explain why certain cues worked better

- Tools: SPSS / Excel / bHaptics Designer / bHaptics TactSuit
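
As a rough illustration of where 8 conditions can come from, the sketch below enumerates every subset of the three modalities, including a no-eHMI baseline. This is an assumption: the write-up does not list the conditions individually, and the order printed here is not the study's own C1 to C8 numbering.

```python
from itertools import combinations

MODALITIES = ("Visual", "Audio", "Haptic")


def enumerate_conditions(modalities=MODALITIES):
    """Return every subset of the modalities, from no cue to all cues."""
    conditions = []
    for size in range(len(modalities) + 1):
        for combo in combinations(modalities, size):
            # The empty subset is treated as a hypothetical no-eHMI baseline.
            conditions.append(" + ".join(combo) if combo else "No eHMI (baseline)")
    return conditions


conditions = enumerate_conditions()
for name in conditions:
    print(name)
```

Three modalities give 2^3 = 8 subsets, which matches the number of conditions reported above.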

Study Procedure

Participants were welcomed and introduced to the study before beginning a single session lasting approximately 30 to 45 minutes.

- Reviewed the information sheet and consent form

- Explained the study procedure and right to withdraw

- Fitted the bHaptics TactSuit and ran a short test vibration

- Completed 8 condition blocks, each containing 4 videos

- Each block included 2 yielding and 2 non-yielding AV scenarios

- Collected a 26-item UEQ after each condition block

- Offered short breaks between conditions if needed

- Finished with a semi-structured interview

- Asked participants to rank the visual, haptic, and audio modalities and explain their preferences
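
The session structure above can be sketched as a simple schedule builder, which also makes the per-participant workload explicit. The shuffling of block and video order is an assumption for illustration (the write-up does not state how order was controlled), and the labels `C1` to `C8` and the function `build_session` are hypothetical names.

```python
import random

N_CONDITIONS = 8       # condition blocks per session
VIDEOS_PER_BLOCK = 4   # 2 yielding + 2 non-yielding
UEQ_ITEMS = 26         # UEQ items answered after each block


def build_session(seed):
    """Build one participant's session as a list of (condition, videos) blocks."""
    rng = random.Random(seed)
    order = [f"C{i}" for i in range(1, N_CONDITIONS + 1)]
    rng.shuffle(order)  # assumption: block order randomised per participant
    session = []
    for condition in order:
        videos = ["yielding"] * 2 + ["non-yielding"] * 2
        rng.shuffle(videos)  # assumption: video order randomised within a block
        session.append((condition, videos))
    return session


session = build_session(seed=1)
total_videos = sum(len(videos) for _, videos in session)
total_ueq_responses = N_CONDITIONS * UEQ_ITEMS
print(total_videos, total_ueq_responses)  # 32 208
```

Each participant therefore watched 32 videos and gave 208 UEQ item responses, which is why optional breaks between blocks mattered for this audience.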

Modality Overview (eHMIs)

Process

A Research-Led Design Approach

Discover

- Reviewed literature on AV-pedestrian communication, eHMIs, and age-related perception needs for older pedestrians.

- Identified a core gap: yielding intent is often ambiguous without driver cues (eye contact, gestures), increasing uncertainty for older users.

Define

- Problem statement: "How might we communicate AV yielding intent clearly and confidently to older pedestrians using multimodal eHMI cues?"

- Defined study variables, modality conditions (Visual, Audio, Haptics, and combinations) and key success metrics: clarity, confidence, perceived safety, and overall UX.

Develop

- Designed the eHMI haptic condition and standardised stimuli for a yielding scenario with consistent timing and presentation across all 8 conditions.

- Built the test flow and materials: consent forms, task instructions, UEQ measures, and a semi-structured interview for preference capture.

- Piloted and refined to reduce bias, improve clarity, and keep sessions consistent.

Deliver

- Conducted moderated sessions with 10 older participants using a within-subjects comparison across eHMI conditions.

- Analysed quantitative UEQ results and triangulated with qualitative feedback to form recommendations.

- Produced practical design guidance and next-step validation ideas (larger sample, higher-fidelity simulation, multi-vehicle scenarios).
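
For readers unfamiliar with UEQ scoring, here is a minimal sketch of how raw responses become scale scores: each 7-point response is shifted to a range of -3 to +3, items whose positive term sits on the left are flipped, and a scale score is the mean of its items. The item numbers, the scale assignment, and the reversed set below are hypothetical placeholders, not the official UEQ item key, and this is not the exact analysis pipeline used in the study.

```python
from statistics import mean


def rescale(raw, reversed_item=False):
    """Map a 1-7 response to -3..+3, flipping reverse-keyed items."""
    if not 1 <= raw <= 7:
        raise ValueError(f"UEQ responses must be 1-7, got {raw}")
    value = raw - 4
    return -value if reversed_item else value


def scale_scores(responses, scale_items, reversed_items):
    """Return the mean rescaled score per scale for one questionnaire."""
    return {
        scale: mean(rescale(responses[i], i in reversed_items) for i in items)
        for scale, items in scale_items.items()
    }


# Hypothetical toy data: two scales with two items each.
responses = {1: 7, 2: 5, 3: 1, 4: 2}
scale_items = {"Perspicuity": [1, 2], "Dependability": [3, 4]}
reversed_items = {3, 4}

scores = scale_scores(responses, scale_items, reversed_items)
print(scores)
```

In practice the full 26-item key and the six scales would replace the toy mapping, and the scores would be averaged across participants for each condition.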

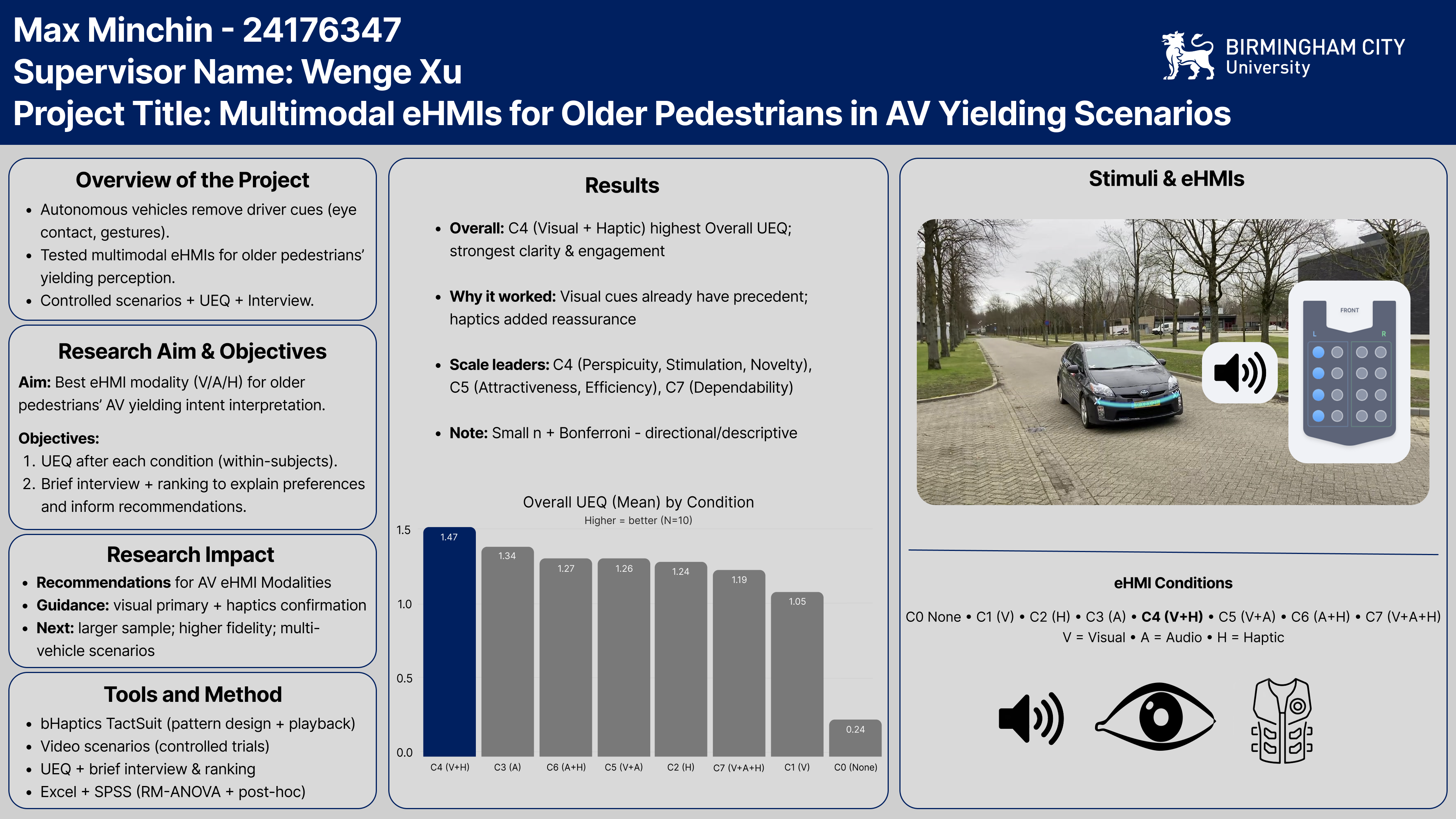

PGXPO Poster

This poster was entered into PGXPO, a research showcase and competition held at Birmingham City University. It was judged against work from 29 MSc students across all programmes, and I was awarded 1st Place and the Research Excellence Award.


Results

Multimodal eHMI outperformed single-modality cues

Across 10 older adult participants and 8 conditions, multimodal combinations consistently scored higher than single-modality cues for clarity, confidence, and perceived safety. Visual cues proved the most intuitive anchor, while haptic-only and audio-only conditions performed poorly when used in isolation.

A layered approach, visual as the primary cue with haptic or audio confirmation, was the strongest model for reducing ambiguity in AV yielding scenarios.

- Overall: C4 (Visual + Haptic) achieved the highest Overall UEQ score - strongest for clarity and engagement

- Why it worked: Visual cues already have precedent; haptics added reassurance without overload

- Scale leaders: C4 led on Perspicuity, Stimulation, and Novelty; C5 on Attractiveness and Efficiency; C7 on Dependability

- Note: Small sample (n=10) means findings are directional and descriptive - indicative of trends rather than statistically generalisable conclusions. Bonferroni correction was applied to account for multiple comparisons.
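
To show how strict a Bonferroni correction becomes with this many conditions, the sketch below works through the arithmetic. It assumes every pair of the 8 conditions is compared at a starting alpha of 0.05; the write-up does not state which comparisons were actually run, so the numbers are illustrative only.

```python
from math import comb


def bonferroni_alpha(alpha, n_comparisons):
    """Per-comparison significance threshold under a Bonferroni correction."""
    return alpha / n_comparisons


n_conditions = 8
n_pairs = comb(n_conditions, 2)             # all pairwise comparisons
adjusted = bonferroni_alpha(0.05, n_pairs)  # assumed starting alpha of 0.05
print(n_pairs, round(adjusted, 5))  # 28 0.00179
```

With a threshold this low and only 10 participants, few differences could reach significance, which is consistent with treating the findings as directional.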

Design Recommendations

Guidelines for AV eHMI Design

- Use visual as the primary channel for communicating yielding intent.

- Add a secondary confirmation cue (haptic and/or audio) to reinforce confidence.

- Keep timing consistent and avoid cue overload; don't layer too much complexity.

- Design for multi-vehicle attribution so users can instantly tell which vehicle is communicating.

Next Steps

Validation and Further Research

- Test with a larger and more diverse sample (including different levels of tech familiarity).

- Validate in a more realistic environment (VR or controlled street-style setup).

- Explore more complex scenes (multiple vehicles, distractions, varied crossing contexts).

- Calibrate haptic intensity and audio volume to ensure accessibility without annoyance.

This study was academic in scope but the findings have direct product relevance. The recommended layered eHMI model maps onto real design decisions for AV interface teams, and older adults remain an underrepresented group in AV research. Designing eHMI with them in mind is both a usability and an ethical priority.

Acknowledgements:

- Thanks to Wenge Xu, Senior Lecturer at Birmingham City University, for his supervision and support throughout.

- Thanks to Dr. Debargha Dey, Assistant Professor at TU/e, for producing the video stimuli and audio and visual eHMI assets used in this study.